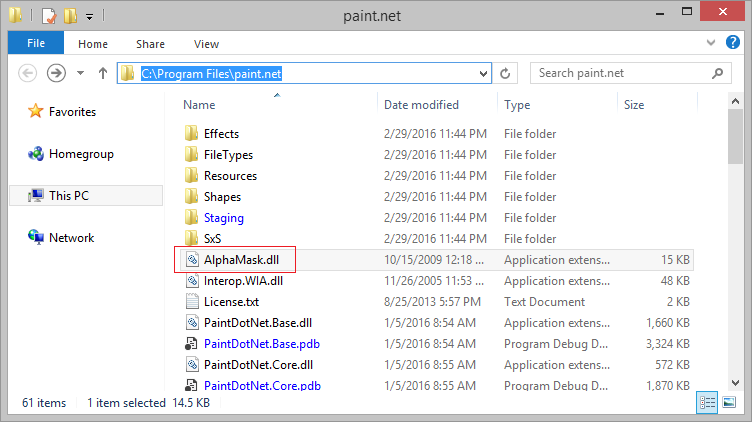

When doing research, it is essential to have test data. For photo analysis, this includes pictures as well as tools, like cameras and graphic editors. Some work can be done with samples created by other people.

However, sometimes nothing beats having the real tools handy.

One of the big problems with maintaining a good test set of graphic editors is that they keep trying to update. If I want the stock version of Adobe Photoshop CS3, then I don't want it to automatically apply patches or upgrade versions. And good luck trying to archive versions from Adobe's Creative Cloud. If I want to know what a picture generated by Adobe CC looked like in October 2015, then I'll need to find sample pictures from around then and hope that they are representative. In this regard, open source is a little easier to maintain than proprietary. If I want to test using Gimp 2.6.12, then I can download 2.6.12, compile it, and test it. (Then I just need to worry about updates to all of the dependency libraries. Or I can just archive whatever Linux distro shipped with that specific version and test from that archive when needed.)

Every now and then, I revisit my tool collection. Using data from FotoForensics, I made a histogram of the various common applications. There's Photoshop, Lightroom, Gimp, PicMonkey, Instagram, and so on. Moreover, different versions of the software behave differently. Photoshop 7.0 doesn't have the same tool signatures as Photoshop CS2. While collecting common programs to test, I came upon a problem. There's a common graphics program called "Paint.NET". I've seen samples of pictures that use this software. It's not as common as Photoshop or Gimp, but it's common enough. I have never used this program before, but thought it was worth adding to my collection. I downloaded their latest software from their dotPDN web site.

However, installing it caused an unexpected result. Specifically, it triggered two different threat warnings from the default Windows 8.1 operating system. With most programs, I expect one threat warning, like "You are about to run something downloaded from the Internet!" But two warnings? That's interesting. The software had passed my basic AV system, but I was still uneasy due to the warnings. I decided to give the program's installer a thorough test before permitting it to continue. There's a web site called Jotti that will test any uploaded file against 20 different anti-virus systems. This is really useful since the best AV systems only catch about 60% of known malware. If the malware isn't caught by one AV system, then maybe it will be caught by a different system. With Paint.NET, 19 out of 20 AV systems tested by Jotti detected nothing. But ClamAV detected "-2".

According to McAfee (the anti-virus company, and not the person who founded the company), this is known malware. Unlike viruses, Trojans do not self-replicate. They are spread manually, often under the premise that they are beneficial or wanted. The most common installation methods involve system or security exploitation, and unsuspecting users manually executing unknown programs. Distribution channels include e-mail, malicious or hacked Web pages, Internet Relay Chat (IRC), peer-to-peer networks, etc.

At this point, I stopped evaluating Paint.NET and rechecked my system for infections. Fortunately, I aborted the install in time.
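For readers curious how a histogram of common applications, like the one mentioned above, might be tallied, here is a minimal sketch. It assumes each picture has already been reduced to a single software string (for example, from its metadata). The function names `tally_software` and `render_histogram` and the sample values are hypothetical, made up for illustration; they are not FotoForensics code or data.

```python
from collections import Counter


def tally_software(tags):
    """Count pictures per software string, skipping blank entries.

    Version numbers are kept as part of the name on purpose, since
    different versions of the same editor can behave differently.
    """
    counts = Counter()
    for tag in tags:
        name = tag.strip()
        if name:
            counts[name] += 1
    return counts


def render_histogram(counts, width=40):
    """Return a plain-text histogram, most common application first."""
    if not counts:
        return ""
    top = max(counts.values())
    lines = []
    for name, n in counts.most_common():
        # Scale each bar relative to the largest count; show at least one mark.
        bar = "#" * max(1, round(n * width / top))
        lines.append(f"{name:<24} {bar} {n}")
    return "\n".join(lines)


# Hypothetical sample values, not real measurements.
sample = [
    "Adobe Photoshop CS2",
    "GIMP 2.6.12",
    "Adobe Photoshop CS2",
    "Paint.NET",
    " GIMP 2.6.12 ",
    "Adobe Photoshop 7.0",
    "",
]

if __name__ == "__main__":
    print(render_histogram(tally_software(sample)))
```

Keeping the version in the key means Photoshop 7.0 and Photoshop CS2 get separate bars, which makes it easier to see which specific versions are worth adding to a test collection.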
